
N-grams have been a common tool for information retrieval and machine learning applications for decades. In nearly all previous works, only a few values of $n$ are tested, with $n > 6$ being exceedingly rare. Larger values of $n$ are not tested due to computational burden or the fear of overfitting.

In this work, we present a method to find the top-$k$ most frequent $n$-grams that is 60$\times$ faster for small $n$, and can tackle large $n\geq1024$. Despite the unprecedented size of $n$ considered, we show how these features still have predictive ability for malware classification tasks. More importantly, large $n$-grams provide benefits in producing features that are interpretable by malware analysis, and can be used to create general purpose signatures compatible with industry standard tools like Yara. Furthermore, the counts of common $n$-grams in a file may be added as features to publicly available human-engineered features that rival the efficacy of professionally-developed features when used to train gradient-boosted decision tree models on the EMBER dataset.
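To make the task concrete, here is a minimal brute-force sketch of top-$k$ byte $n$-gram counting. It is not the method from the paper (which is designed to scale to very large $n$); the function name `top_k_ngrams` is an assumption made for illustration, and this naive version keeps every distinct $n$-gram in memory.

```python
from collections import Counter
from typing import List, Tuple


def top_k_ngrams(data: bytes, n: int, k: int) -> List[Tuple[bytes, int]]:
    """Return the k most frequent byte n-grams in `data` with their counts.

    Naive baseline: every distinct n-gram is stored, so memory grows quickly
    with file size and n. Hypothetical helper, not the paper's algorithm.
    """
    if n <= 0 or k <= 0:
        raise ValueError("n and k must be positive")
    # Slide a window of width n over the byte string, one position at a time.
    counts = Counter(data[i:i + n] for i in range(len(data) - n + 1))
    return counts.most_common(k)


if __name__ == "__main__":
    print(top_k_ngrams(b"abababab", n=2, k=2))
```

The counts returned for a file could then be appended to an existing feature vector, which is the kind of use the abstract describes.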

With the increasing number of scientific publications, analyzing the trends and the state of the art in a given scientific field is becoming a very time-consuming and tedious task. In response to urgent information needs, for which the existing systematic review model is not well suited, several other review types have emerged, namely rapid reviews and scoping reviews.

The paper proposes an NLP-powered tool that automates most of the review process by automatic analysis of articles indexed in the IEEE Xplore, PubMed, and Springer digital libraries. We demonstrate the applicability of the toolkit by analyzing articles related to Enhanced Living Environments and Ambient Assisted Living, in accordance with the PRISMA surveying methodology. The relevant articles were processed by the NLP toolkit to identify articles that contain up to 20 properties clustered into 4 logical groups.

The analysis showed increasing attention from the scientific communities towards Enhanced and Assisted living environments over the last 10 years and showed several trends in the specific research topics that fall into this scope. The case study demonstrates that the NLP toolkit can ease and speed up the review process and surface valuable insights from the surveyed articles even without manual reading of most of the articles. Moreover, it pinpoints the most relevant articles, which contain more properties, and therefore significantly reduces the manual work, while also generating informative tables, charts and graphs.

In a nutshell, it is a type of statistical model used for tagging abstract “topics” that occur in a collection of documents that best represents the information in them.

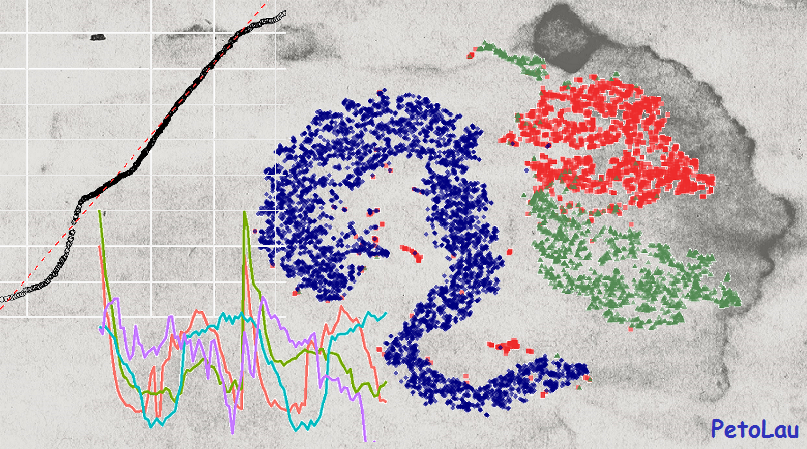

Many techniques are used to obtain topic models. This post aims to demonstrate the implementation of LDA: a widely used topic modeling technique.

pyLDAvis is a Python library for interactive topic model visualization. It is a port of the fabulous R package by Carson Sievert and Kenny Shirley. They did the hard work of crafting an effective visualization. pyLDAvis makes it easy to use the visualization from Python and, in particular, Jupyter notebooks.

To learn more about the method behind the visualization, see the original paper explaining it.

This notebook provides a quick overview of how to use pyLDAvis.

This article introduces how to build a Python and Flask based web application for performing text analytics on internet resources such as blog pages. To perform text analytics I will be using Requests for fetching web pages, BeautifulSoup for parsing HTML and extracting the viewable text, and the TextBlob package to calculate a few sentiment scores.
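The "extract the viewable text" step can be sketched with the standard library alone. This is a stand-in that uses `html.parser` rather than BeautifulSoup, so it is not the article's code; the class and function names are assumptions, and fetching the page and scoring sentiment are left out.

```python
from html.parser import HTMLParser


class VisibleTextParser(HTMLParser):
    """Collect text that a reader would see, skipping script/style/head content."""

    SKIPPED = {"script", "style", "head", "title", "noscript"}

    def __init__(self) -> None:
        super().__init__()
        self._skip_depth = 0  # > 0 while inside a skipped element
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        text = data.strip()
        if self._skip_depth == 0 and text:
            self.chunks.append(text)


def visible_text(html: str) -> str:
    """Return the visible text of an HTML document as one space-joined string."""
    parser = VisibleTextParser()
    parser.feed(html)
    parser.close()
    return " ".join(parser.chunks)


if __name__ == "__main__":
    page = "<html><head><title>T</title></head><body><p>Hello <b>world</b></p></body></html>"
    print(visible_text(page))
```

The resulting string is what would be handed to a sentiment library in the pipeline the article describes.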

Anonymization has been the main means of addressing privacy concerns in sharing medical and socio-demographic data. Here, the authors estimate the likelihood that a specific person can be re-identified in heavily incomplete datasets, casting doubt on the adequacy of current anonymization practices.

A curated list of applied machine learning and data science notebooks and libraries across different industries.

Deep learning techniques have become the method of choice for researchers working on algorithmic aspects of recommender systems. With the strongly increased interest in machine learning in general, it has, as a result, become difficult to keep track of what represents the state-of-the-art at the moment, e.g., for top-n recommendation tasks. At the same time, several recent publications point out problems in today's research practice in applied machine learning, e.g., in terms of the reproducibility of the results or the choice of the baselines when proposing new models.

In this work, we report the results of a systematic analysis of algorithmic proposals for top-n recommendation tasks. Specifically, we considered 18 algorithms that were presented at top-level research conferences in the last years. Only 7 of them could be reproduced with reasonable effort. For these methods, it however turned out that 6 of them can often be outperformed with comparably simple heuristic methods, e.g., based on nearest-neighbor or graph-based techniques. The remaining one clearly outperformed the baselines but did not consistently outperform a well-tuned non-neural linear ranking method.
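For a sense of how simple a nearest-neighbor style baseline can be, here is a minimal item-based sketch on implicit feedback. It is not one of the baselines evaluated in the paper; the function name, the data layout (a dict mapping each user to a set of items), and the choice of cosine similarity without neighborhood truncation are all assumptions.

```python
import math
from collections import defaultdict
from typing import Dict, List, Set


def item_knn_recommend(interactions: Dict[str, Set[str]], user: str, top_n: int = 2) -> List[str]:
    """Rank unseen items by summed cosine similarity to the user's seen items."""
    # Invert the data: for each item, the set of users who interacted with it.
    item_users = defaultdict(set)
    for u, items in interactions.items():
        for item in items:
            item_users[item].add(u)

    seen = interactions.get(user, set())
    scores = defaultdict(float)
    for candidate, cand_users in item_users.items():
        if candidate in seen:
            continue
        for item in seen:
            overlap = len(cand_users & item_users[item])
            if overlap:
                # Cosine similarity between two binary user vectors.
                scores[candidate] += overlap / math.sqrt(len(cand_users) * len(item_users[item]))

    # Highest score first; ties broken alphabetically for determinism.
    ranked = sorted(scores.items(), key=lambda kv: (-kv[1], kv[0]))
    return [item for item, _ in ranked[:top_n]]


if __name__ == "__main__":
    data = {"u1": {"a", "b"}, "u2": {"a", "b", "c"}, "u3": {"b", "c"}, "u4": {"d"}, "u5": {"a"}}
    print(item_knn_recommend(data, "u5"))
```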

Overall, our work sheds light on a number of potential problems in today's machine learning scholarship and calls for improved scientific practices in this area. Source code of our experiments and full results are available at: https://github.com/MaurizioFD/RecSys2019_DeepLearning_Evaluation.

A vegetable-picking robot that uses machine learning to identify and harvest a commonplace, but challenging, agricultural crop has been developed by engineers.

Your new best friend built with an artificial neural network - olivia-ai/olivia

In research & news articles, keywords form an important component since they provide a concise representation of the article’s content. Keywords also play a crucial role in locating the article from information retrieval systems, bibliographic databases and for search engine optimization. Keywords also help to categorize the article into the relevant subject or discipline.

Conventional approaches to extracting keywords involve manual assignment of keywords based on the article content and the authors’ judgment. This takes a lot of time and effort and may not select the most appropriate keywords. With the emergence of Natural Language Processing (NLP), keyword extraction has become both more effective and more efficient.

And in this article, we will combine the two — we’ll be applying NLP on a collection of articles (more on this below) to extract keywords.
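One common way to score candidate keywords is TF-IDF. The sketch below is a small standard-library version under stated assumptions: the stopword list is a tiny illustrative one, the smoothed IDF formula is one of several variants, and the function names are hypothetical. It is not necessarily the approach the article takes.

```python
import math
import re
from collections import Counter
from typing import List, Tuple

# Tiny illustrative stopword list (an assumption, far from complete).
STOPWORDS = {"a", "an", "and", "in", "of", "on", "the", "to", "with"}


def tokenize(text: str) -> List[str]:
    """Lowercase, keep alphabetic tokens, drop stopwords."""
    return [w for w in re.findall(r"[a-z]+", text.lower()) if w not in STOPWORDS]


def tfidf_keywords(docs: List[str], top_n: int = 3) -> List[List[Tuple[str, float]]]:
    """For each document, return its top_n (word, score) pairs by TF-IDF."""
    tokenized = [tokenize(d) for d in docs]
    doc_freq = Counter()
    for tokens in tokenized:
        doc_freq.update(set(tokens))  # count each word once per document

    n_docs = len(docs)
    results = []
    for tokens in tokenized:
        term_freq = Counter(tokens)
        total = len(tokens) or 1  # avoid division by zero on empty documents
        scores = {
            w: (c / total) * (math.log((1 + n_docs) / (1 + doc_freq[w])) + 1)
            for w, c in term_freq.items()
        }
        ranked = sorted(scores.items(), key=lambda kv: (-kv[1], kv[0]))
        results.append(ranked[:top_n])
    return results


if __name__ == "__main__":
    corpus = ["the cat sat on the mat with the cat", "the dog chased the ball"]
    for keywords in tfidf_keywords(corpus, top_n=2):
        print(keywords)
```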

In this post we’ll explore how we can derive logistic regression from Bayes’ Theorem. Starting with Bayes’ Theorem we’ll work our way to computing the log odds of our problem and then arrive at the inverse logit function. After reading this post you’ll have a much stronger intuition for how logistic
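The link between the two can be checked numerically. For a binary class, the posterior from Bayes' Theorem equals the inverse logit (sigmoid) applied to the log likelihood ratio plus the log prior odds. The sketch below assumes a single observation with known likelihoods; the function names and the example numbers are illustrative only.

```python
import math


def sigmoid(z: float) -> float:
    """Inverse logit: maps log odds to a probability."""
    return 1.0 / (1.0 + math.exp(-z))


def posterior_bayes(prior: float, lik_pos: float, lik_neg: float) -> float:
    """P(y=1 | x) computed directly from Bayes' Theorem."""
    return lik_pos * prior / (lik_pos * prior + lik_neg * (1.0 - prior))


def posterior_logit(prior: float, lik_pos: float, lik_neg: float) -> float:
    """P(y=1 | x) computed as sigmoid(log likelihood ratio + log prior odds)."""
    log_odds = math.log(lik_pos / lik_neg) + math.log(prior / (1.0 - prior))
    return sigmoid(log_odds)


if __name__ == "__main__":
    # Illustrative values: P(y=1)=0.3, P(x|y=1)=0.8, P(x|y=0)=0.1.
    print(posterior_bayes(0.3, 0.8, 0.1))
    print(posterior_logit(0.3, 0.8, 0.1))
```

Both functions print the same value up to floating-point error. Logistic regression then models the log odds as a linear function of the features.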

In the midst of the deep learning hype, p-values might not be the hottest topic in data science. However, association mapping remains a fundamental tool to justify and underpin scientific conclusions. Inspired by an approach for time series classification based on predictive subsequences (i.e., shapelets [1]), we developed S3M, a method that identifies short time series subsequences that are statistically associated with a class or phenotype while tackling the multiple hypothesis problem.

I created an Instagram page that showcased pictures of New York City’s skylines, iconic spots, elegant skyscrapers — you name it. The page has amassed a following of over 25,000 users in the NYC area and it’s still rapidly growing.

You will learn in this post how to:

- decompose double-seasonal time series

- detrend time series

- model and forecast double-seasonal time series with trend

- use two types of simple regression trees

- set important hyperparameters related to regression trees
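As a rough illustration of the detrending and seasonal steps in the list above, here is a standard-library sketch: a least-squares linear detrend followed by per-position seasonal means. The post's own decomposition methods and regression trees are not reproduced here; the function names and the linear-trend assumption are mine.

```python
from typing import List, Tuple


def linear_detrend(series: List[float]) -> Tuple[List[float], float, float]:
    """Fit y = intercept + slope * t by least squares and subtract the fit.

    Returns (detrended series, slope, intercept).
    """
    n = len(series)
    if n < 2:
        raise ValueError("need at least two points to fit a trend")
    t_mean = (n - 1) / 2
    y_mean = sum(series) / n
    sxx = sum((t - t_mean) ** 2 for t in range(n))
    sxy = sum((t - t_mean) * (y - y_mean) for t, y in enumerate(series))
    slope = sxy / sxx
    intercept = y_mean - slope * t_mean
    detrended = [y - (intercept + slope * t) for t, y in enumerate(series)]
    return detrended, slope, intercept


def seasonal_means(series: List[float], period: int) -> List[float]:
    """Average the series at each position within a seasonal period."""
    if period <= 0 or len(series) < period:
        raise ValueError("period must be positive and no longer than the series")
    buckets = [[] for _ in range(period)]
    for t, y in enumerate(series):
        buckets[t % period].append(y)
    return [sum(b) / len(b) for b in buckets]


if __name__ == "__main__":
    data = [2 * t + (1 if t % 2 else -1) for t in range(12)]
    detrended, slope, intercept = linear_detrend(data)
    print(slope, intercept)
    print(seasonal_means(detrended, period=2))
```

For a double-seasonal series, `seasonal_means` could be applied once per period (for example daily, then weekly), with each seasonal component subtracted before estimating the next.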

This article focuses on using a Deep LSTM Neural Network architecture to provide multidimensional time series forecasting using Keras and Tensorflow - specifically on stock market datasets to provide momentum indicators of stock price.

The following article sections will briefly touch on LSTM neuron cells, give a toy example of predicting a sine wave, and then walk through the application to a stochastic time series. The article assumes a basic working knowledge of simple deep neural networks.
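Before a sequence model can be trained on a series such as a sine wave, the series is usually cut into fixed-length input windows, each paired with the value that follows it. The sketch below shows only that data preparation step, without Keras or TensorFlow; the function name, window length, and sampling step are assumptions and not taken from the article.

```python
import math
from typing import List, Tuple


def make_windows(series: List[float], window: int) -> Tuple[List[List[float]], List[float]]:
    """Split a series into (inputs, targets) for one-step-ahead forecasting.

    Each input is `window` consecutive values; its target is the next value.
    """
    if window <= 0:
        raise ValueError("window must be positive")
    inputs, targets = [], []
    for start in range(len(series) - window):
        inputs.append(series[start:start + window])
        targets.append(series[start + window])
    return inputs, targets


if __name__ == "__main__":
    # Toy sine wave sampled every 0.1 radians (illustrative choice).
    sine = [math.sin(0.1 * t) for t in range(100)]
    X, y = make_windows(sine, window=10)
    print(len(X), len(X[0]), len(y))
```

The resulting lists would typically be converted to arrays of shape (samples, window, features) before being passed to an LSTM layer.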

Generates random text from Markov chains of tagged source text.

An example text is included which was derived from Plato's Ion:

Have you already forgotten what you were saying?

A rhapsode ought to interpret the mind of the poet.

For the rhapsode ought to interpret the mind of the poet.

For the poet is a light and winged and holy thing,

and there is Phanosthenes of Andros,

and Heraclides of Clazomenae,

whom they have also appointed

to the command of their armies and to other offices,

although aliens, after they had shown their merit.

And will they not choose Ion the Ephesian to be their general,

and honour him, if he prove himself worthy?

I recently wrote a Markov chain package which included a random text generator. The generated text is not very good.

The rest of this post covers the evolution of the main algorithm.

fakernews builds a markov chain using the top 500 post titles on HN and generates fake HN posts.
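The general idea of generating titles from a first-order, word-level Markov chain can be sketched as follows. This is not the fakernews implementation; the start/end markers, function names, and sample titles are assumptions for illustration.

```python
import random
from collections import defaultdict
from typing import Dict, List

START, END = "<s>", "</s>"  # hypothetical sentinel tokens


def build_chain(titles: List[str]) -> Dict[str, List[str]]:
    """Map each word to the list of words observed directly after it."""
    chain = defaultdict(list)
    for title in titles:
        words = [START] + title.split() + [END]
        for current, following in zip(words, words[1:]):
            chain[current].append(following)
    return chain


def generate(chain: Dict[str, List[str]], rng: random.Random, max_words: int = 20) -> str:
    """Walk the chain from START until END or max_words is reached."""
    if START not in chain:
        return ""  # no training titles
    word, output = START, []
    while len(output) < max_words:
        # Repeated successors appear multiple times, so choice is frequency-weighted.
        word = rng.choice(chain[word])
        if word == END:
            break
        output.append(word)
    return " ".join(output)


if __name__ == "__main__":
    sample_titles = [
        "Show HN: a tiny Markov chain library",
        "Ask HN: how do you learn a new language",
        "Show HN: a new language for text generation",
    ]
    print(generate(build_chain(sample_titles), random.Random(42)))
```

Passing an explicit `random.Random` instance keeps runs reproducible, which helps when comparing changes to the chain.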

This is an example program to demonstrate the capabilities of a Golang library to build Markov models.